Memory Management in Elixir

Every programming language has its limits and flaws. Memory and resource leaks cause performance problems and, left unchecked, exhaust the available memory. Recently I ran into this with Elixir. The cause can be a bug in the developer's own code, or a rogue module acting insane. Today, we will discuss both cases.

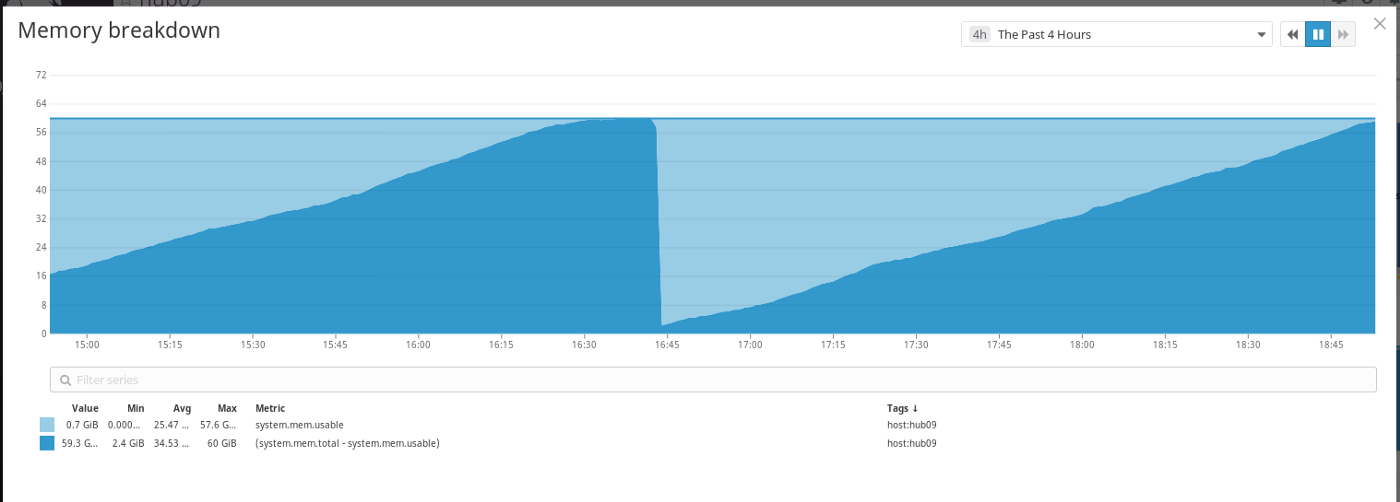

The application started to use huge amounts of memory, and the system began crashing on its own.

First of all, Elixir runs on top of the BEAM, the Erlang virtual machine, which itself acts like an operating system: it has its own process scheduler and its own memory management. Many of Elixir's core modules, such as DynamicSupervisor, are built along similar lines.

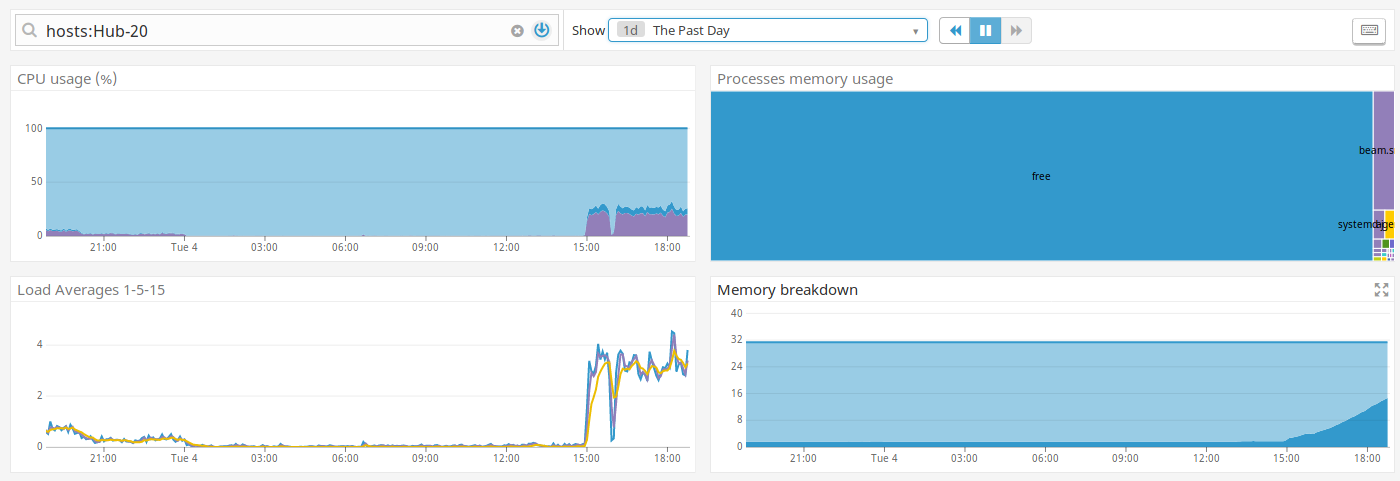

We had a major issue with our application (which we inherited): our sysadmin showed us that it was consuming huge amounts of both compute and RAM (32 GB). According to our analysis, it should never cross 1 GB.

This issue was causing the application to crash, and obviously financial losses ☺. We started to investigate this bizarre issue.

We started at the code level, beginning with ETS tables, since they are the only place where the application stores data (for a few components). Across several stress tests, the application never crossed 100 MB, even at peak capacity. So we ruled out code-level issues.
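A stress check of this kind can be sketched by filling an ETS table and asking the VM how large it has become. This is a minimal sketch, not our actual test harness; the module name EtsStress and the payload shape are hypothetical. Note that :ets.info/2 reports memory in machine words, so the result is multiplied by the word size to get bytes.

```elixir
defmodule EtsStress do
  # Hypothetical helper: fills a fresh ETS table with `rows` entries
  # and returns the memory the table occupies, in bytes.
  def table_bytes(rows) when is_integer(rows) and rows > 0 do
    tab = :ets.new(:stress_test, [:set, :private])

    Enum.each(1..rows, fn i ->
      :ets.insert(tab, {i, "payload-#{i}"})
    end)

    # :ets.info(tab, :memory) is measured in words, not bytes.
    bytes = :ets.info(tab, :memory) * :erlang.system_info(:wordsize)
    :ets.delete(tab)
    bytes
  end
end
```

Calling EtsStress.table_bytes/1 with the row counts you expect at peak load gives a rough upper bound to compare against what the production node reports.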

Next, we took a surgical approach: we had to inspect a live (production) server to corner the issue.

Not surprisingly, the BEAM acts like a mini OS of its own, and entering that world was easy: we had no difficulty connecting to the running BEAM node.

```shell
bin/application remote_console
```

With the command above, we can attach to one of the running nodes without restarting it or redeploying any code.

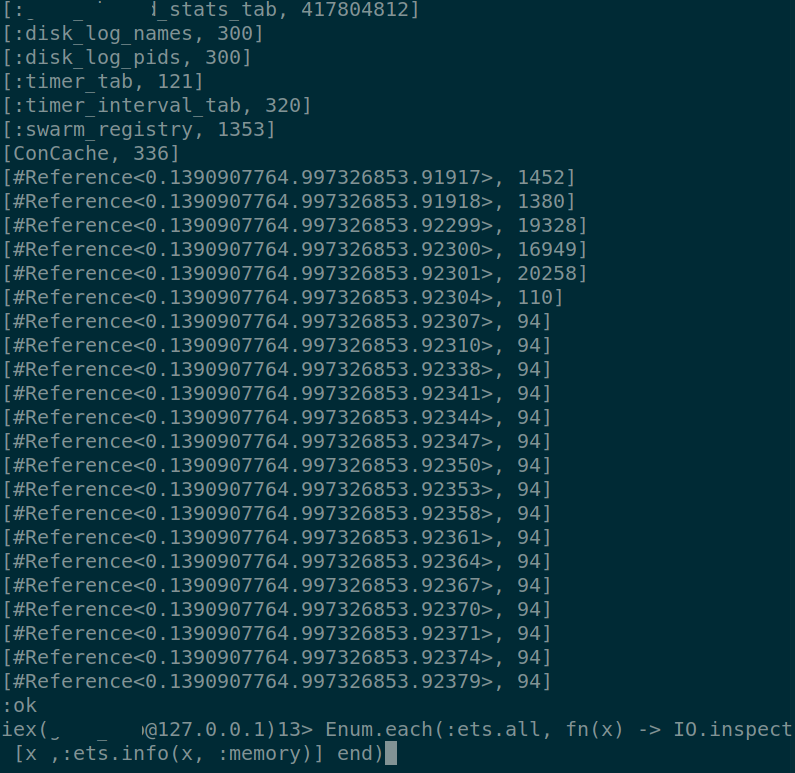

First and foremost, we checked how memory was distributed: :erlang.memory gives a glimpse of the memory distribution inside the BEAM. It showed that processes and ETS tables were eating huge chunks of RAM. We started by debugging the ETS tables.

The command lists the ETS tables along with their names and the memory each one uses.
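Both checks can be reproduced from the remote console. The following is a minimal sketch rather than the exact command we ran; the module name MemoryReport is hypothetical, while :erlang.memory/0, :ets.all/0 and :ets.info/2 are standard OTP functions.

```elixir
defmodule MemoryReport do
  @mb 1_048_576

  # :erlang.memory/0 returns a keyword list such as
  # [total: ..., processes: ..., ets: ..., binary: ...] with values in bytes.
  # Each value is converted to megabytes here.
  def breakdown_mb do
    Enum.map(:erlang.memory(), fn {kind, bytes} ->
      {kind, Float.round(bytes / @mb, 2)}
    end)
  end

  # Lists the `limit` largest ETS tables as {name, bytes}, largest first.
  def ets_tables_by_memory(limit \\ 10) do
    word_size = :erlang.system_info(:wordsize)

    :ets.all()
    |> Enum.flat_map(fn tab ->
      # A table may be deleted between :ets.all/0 and :ets.info/2;
      # :ets.info/2 then returns :undefined and the table is skipped.
      case {:ets.info(tab, :name), :ets.info(tab, :memory)} do
        {:undefined, _} -> []
        {_, :undefined} -> []
        {name, words} -> [{name, words * word_size}]
      end
    end)
    |> Enum.sort_by(fn {_name, bytes} -> bytes end, :desc)
    |> Enum.take(limit)
  end
end
```

Running MemoryReport.breakdown_mb() and then MemoryReport.ets_tables_by_memory(5) a few minutes apart makes a steadily growing table easy to spot.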

We found that ex_statsd (https://github.com/CargoSense/ex_statsd), the module we used to send data from Erlang to Datadog, was caching data without ever flushing it. The module had been deprecated a couple of years earlier, and an issue on GitHub mentioned the memory leak. While looking for an alternative, we observed that even the alternative had a memory leak issue.

Both libraries shared a common memory leak. When a packet is sent to the module, it tries to send the data to a system port; if the port is unavailable or not responding at that moment, the packet is stored in an ETS table to be resent later. Retrying is a really good intention, but the catch is that no threshold is set for flushing the buffered data. When an application scales from a few KB to GBs, that buffer becomes a heavy burden on resources.
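The missing piece is a cap on the retry buffer. The sketch below is not the code of ex_statsd or its alternative; it is a hypothetical BoundedRetryBuffer module showing one way to bound an ETS-backed retry queue. Evicting the oldest packet once the cap is reached is an assumed policy; dropping the newest packet instead would be an equally valid choice.

```elixir
defmodule BoundedRetryBuffer do
  # Hypothetical ETS-backed retry buffer with a hard size cap.
  # Keys come from a strictly increasing counter, so in an :ordered_set
  # :ets.first/1 always returns the oldest buffered packet.

  def new(max_size) when is_integer(max_size) and max_size > 0 do
    tab = :ets.new(:retry_buffer, [:ordered_set, :public])
    {tab, max_size}
  end

  # Buffers a packet for a later retry. Once the cap is exceeded the
  # oldest packets are evicted, so the table can never grow without bound.
  def push({tab, max_size} = buffer, packet) do
    key = System.unique_integer([:monotonic])
    :ets.insert(tab, {key, packet})
    trim(tab, max_size)
    buffer
  end

  # Removes and returns all buffered packets, oldest first.
  def drain({tab, _max_size}), do: do_drain(tab, [])

  def size({tab, _max_size}), do: :ets.info(tab, :size)

  defp trim(tab, max_size) do
    if :ets.info(tab, :size) > max_size do
      :ets.delete(tab, :ets.first(tab))
      trim(tab, max_size)
    end
  end

  defp do_drain(tab, acc) do
    case :ets.first(tab) do
      :"$end_of_table" ->
        Enum.reverse(acc)

      key ->
        # :ets.take/2 deletes and returns the entry in one step.
        case :ets.take(tab, key) do
          [{^key, packet}] -> do_drain(tab, [packet | acc])
          [] -> do_drain(tab, acc)
        end
    end
  end
end
```

A retry loop would push/2 each packet that fails to send and call drain/1 once the port responds again; at worst, the buffer holds max_size packets.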

Now comes the real challenge: debugging process memory leaks.

The BEAM pulled another function out of its magic hat: it can list every running process, and we started the trail from there. Ever wondered how processes can hold memory in a functional language, where memory should be released once a function has executed? Think about it for a moment before going further. What we found was bad coding practice all the way down to its core.
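Following that trail can be sketched as ranking the running processes by memory. This is a minimal sketch, not necessarily the function we used; ProcessReport is a hypothetical name, while Process.list/0 and Process.info/2 are part of Elixir's standard library. A long-lived process whose memory keeps climbing between two runs is the prime suspect.

```elixir
defmodule ProcessReport do
  # Returns the `limit` processes using the most memory, largest first,
  # as {pid, bytes, label} tuples.
  def top_by_memory(limit \\ 10) do
    Process.list()
    |> Enum.flat_map(fn pid ->
      # Process.info/2 returns nil if the process has already exited.
      case Process.info(pid, [:memory, :registered_name, :initial_call]) do
        nil -> []
        info -> [{pid, info[:memory], label(info)}]
      end
    end)
    |> Enum.sort_by(fn {_pid, bytes, _label} -> bytes end, :desc)
    |> Enum.take(limit)
  end

  # Prefer the registered name; fall back to the initial call {m, f, a}.
  # For unregistered processes :registered_name is reported as [].
  defp label(info) do
    case info[:registered_name] do
      [] -> info[:initial_call]
      name -> name
    end
  end
end
```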

GenServer is a great tool in the Elixir ecosystem, and some great applications have been built with it. But, as the Linux sudo lecture puts it, with great power comes great responsibility: when we misuse it, it breaks the application.

Our GenServer was acting insanely not because of a bug in GenServer, but because a developer had turned a great feature into a serious bug.

A GenServer has state, which can hold data for reuse or keep a payload around for the next request to take advantage of. Our code was written so that each new request appended its data to the state. Yes, appended. This is not an ideal way to handle it: the state lives as long as the process does, so it keeps growing and none of it is ever freed.
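The fix can be sketched with a small GenServer. PayloadHolder is hypothetical, not the code we inherited, and keeping only the latest max payloads is an assumed policy. The point is that whatever the state holds must be bounded: a long-lived process keeps everything in its state reachable, so the garbage collector can never free it.

```elixir
defmodule PayloadHolder do
  # Hypothetical GenServer that keeps at most `max` recent payloads.
  # An unbounded variant would append every payload to the state
  # (e.g. `payloads ++ [payload]`), and because the state stays
  # reachable for the lifetime of the process, none of it could be
  # garbage collected.
  use GenServer

  def start_link(max) when is_integer(max) and max > 0 do
    GenServer.start_link(__MODULE__, max)
  end

  def put(pid, payload), do: GenServer.cast(pid, {:put, payload})

  def recent(pid), do: GenServer.call(pid, :recent)

  @impl true
  def init(max), do: {:ok, %{max: max, payloads: []}}

  @impl true
  def handle_cast({:put, payload}, %{max: max, payloads: payloads} = state) do
    # Prepend (O(1)) and cut the list back to `max` entries, newest first.
    {:noreply, %{state | payloads: Enum.take([payload | payloads], max)}}
  end

  @impl true
  def handle_call(:recent, _from, state), do: {:reply, state.payloads, state}
end
```

If the next request only needs the most recent payload, max can be 1; if nothing needs to outlive a request, the payload should not live in the state at all.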

Both issues hampered the overall application and brought financial losses to the company.

This was an eye-opener for us: be cautious about the modules inside your application. Now that the project has grown in size, we have made it a practice to keep an eye on them.

That is our experience of handling memory leaks in Erlang. Please share yours if you have run into something similar.

Our effort to minimize resource usage is still going on. The next segment will be a war on parsers, specifically JSON and XML, which we plan to put through a sheer battle test: we have a load of about 5 MB/sec to process, so any fractional improvement would be great for us.